Handwriting analysis

Convert handwriting into text

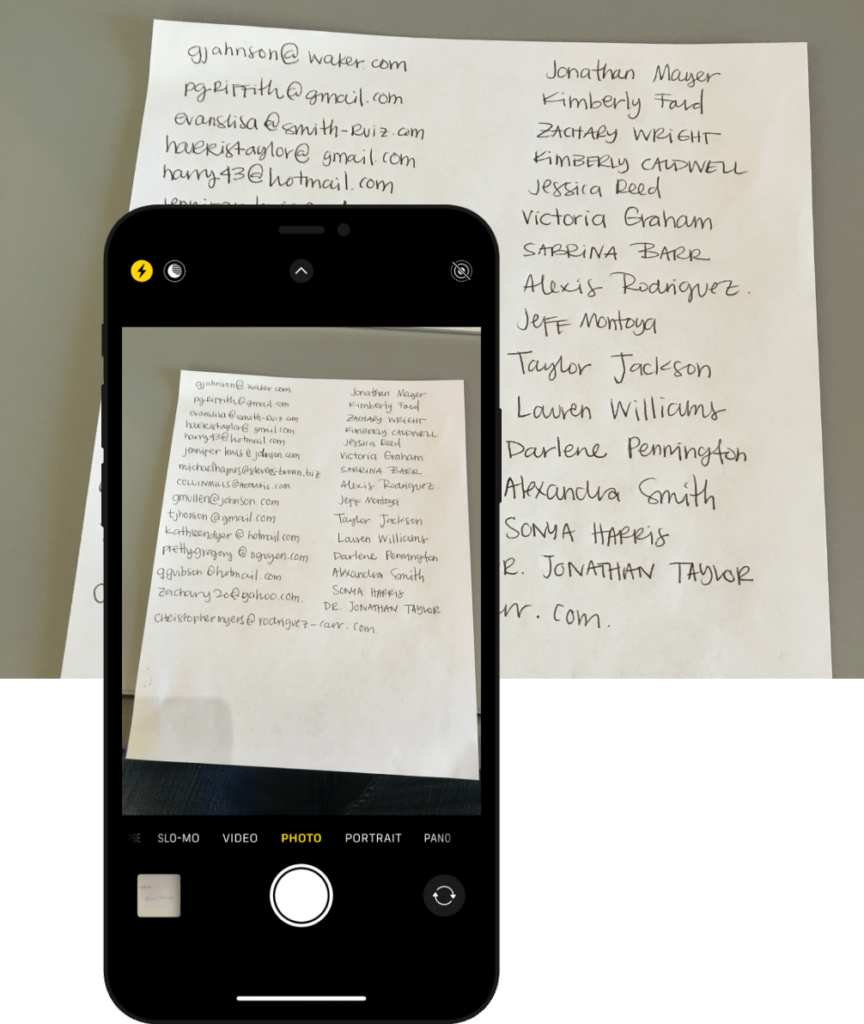

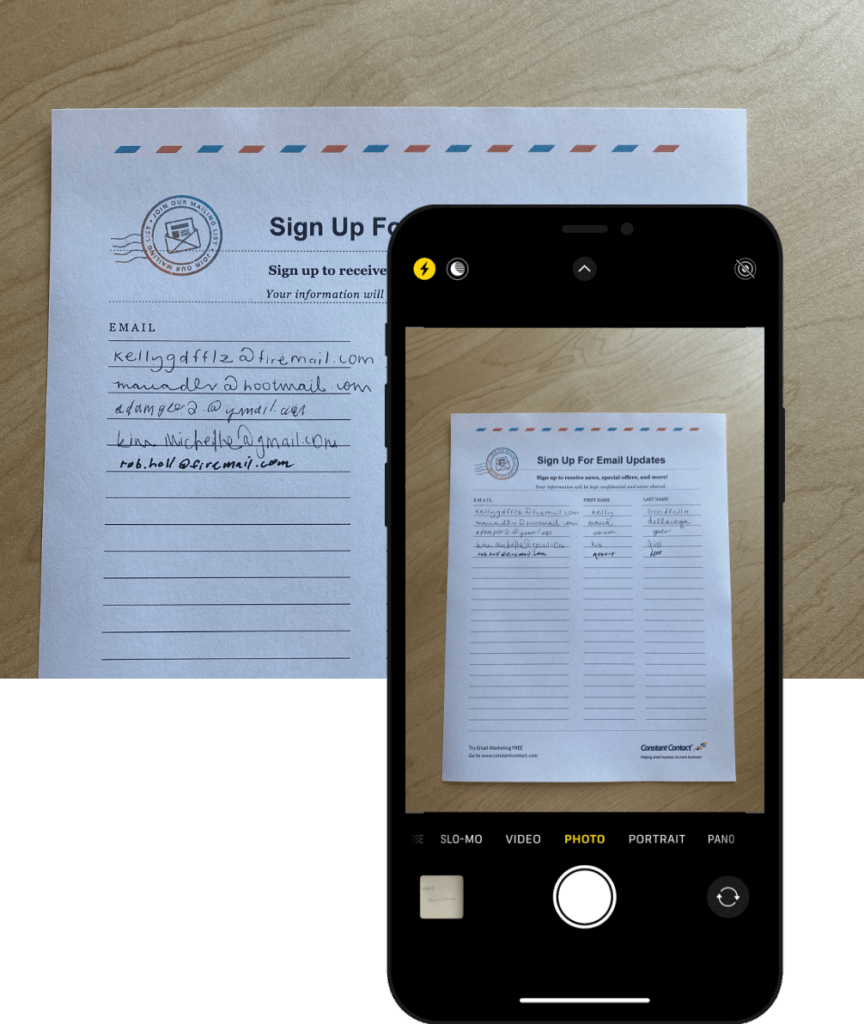

A billion-dollar client needed to convert thousands of handwritten names and email addresses into typed text so that its marketing team could store, sort, and search the contact information digitally. Existing out-of-the-box solutions fell short on recognition accuracy, so the client came to us for a custom software development approach to solve this data challenge.

Training data challenge

Converting handwritten script to typed text continues to be one of the most vexing challenges in data science. A model that can automatically recognize and digitize handwritten notes and segment text within lists requires a complex deep learning neural network and a large dataset composed of millions of handwriting samples. Our AI software development and machine learning experts took up the gauntlet.

our role

- research + development

- create large training data set

- develop neural network model

- develop api

- develop js frontend to capture user correction

platforms

- keras

- tensorflow

- opencv

deliverables

- trained neural network model

- api

- source code

- training data synthesizer

Jobs to be done

- scan and convert handwritten notes to text

- sort and search handwritten data

- edit handwritten notes

- draw insights from handwritten text

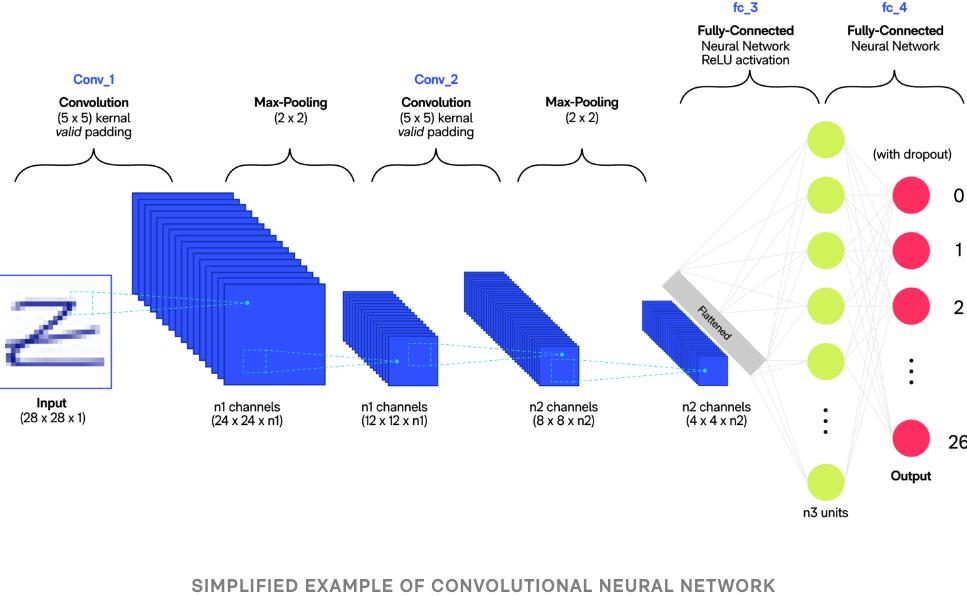

Deep learning neural network

Converting images of handwritten text to typed text effectively requires a deep learning recurrent neural network (RNN) and millions of handwriting records to serve as its training data. Our team of developers took a highly innovative approach to address the data challenge.

To train the neural network, we needed training data that it could read and analyze. A network of this kind typically requires millions of data points, which would take years to collect by hand.

Instead of generating the necessary handwritten character samples manually, our AI experts used a combination of script typefaces commonly available on Microsoft, macOS, and Google platforms as a starting point for training data. We then randomized the rendering of each character across these typefaces, resulting in a scramble of fonts that varied in size, emphasis, and kerning within each word.
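The per-character randomization described above can be sketched roughly as follows. This is a minimal illustration, not the project's synthesizer: the font names, the size and kerning ranges, the 30% bold probability, and the `GlyphStyle`, `randomize_word`, and `layout_offsets` names are all assumptions made for the example. It only produces a rendering plan for each word; an image library would then draw each glyph with its chosen style.

```python
import random
from dataclasses import dataclass

# Hypothetical font names; substitute whichever script typefaces are installed.
SCRIPT_FONTS = ["ScriptFontA", "ScriptFontB", "ScriptFontC"]


@dataclass
class GlyphStyle:
    char: str
    font: str
    size_pt: int
    bold: bool
    kerning_px: int  # extra horizontal gap added after this character


def randomize_word(word, rng):
    """Pick an independent font, size, emphasis, and kerning per character."""
    return [
        GlyphStyle(
            char=ch,
            font=rng.choice(SCRIPT_FONTS),
            size_pt=rng.randint(18, 30),   # assumed size range
            bold=rng.random() < 0.3,       # assumed emphasis probability
            kerning_px=rng.randint(-2, 4), # assumed kerning range
        )
        for ch in word
    ]


def layout_offsets(styles, base_advance=0.6):
    """Compute each glyph's x offset, assuming advance = base_advance * size."""
    x, offsets = 0.0, []
    for s in styles:
        offsets.append(x)
        x += base_advance * s.size_pt + s.kerning_px
    return offsets


rng = random.Random(42)  # seeded so a sample can be regenerated
word = "hello@example.com"
styles = randomize_word(word, rng)
offsets = layout_offsets(styles)
```

Because every character draws its style independently, the same word yields a different mix of fonts and spacing on each call, which is what lets a small set of typefaces stand in for many writing styles.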

To further imitate scribbled handwriting, we added noise to the letters, making them dirtier, fuzzier, and less legible. The result was a data set of messy characters and many variations of each letter and word.
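One way to make clean rendered letters "dirtier" and "fuzzier" is to blur the image and then add random pixel noise. The sketch below is an assumption about how such a step could look, not the project's actual pipeline, which may well have used OpenCV operations instead. It works on a grayscale image stored as a list of rows with values from 0 (black) to 255 (white) so that it needs no third-party packages; the function names and the `sigma` default are hypothetical.

```python
import random


def box_blur(img):
    """3x3 mean filter; edge pixels average over the neighbours that exist."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            vals = [
                img[j][i]
                for j in range(max(0, y - 1), min(h, y + 2))
                for i in range(max(0, x - 1), min(w, x + 2))
            ]
            row.append(round(sum(vals) / len(vals)))
        out.append(row)
    return out


def add_gaussian_noise(img, sigma, rng):
    """Add zero-mean Gaussian noise to each pixel, clamped to 0..255."""
    return [
        [min(255, max(0, round(p + rng.gauss(0, sigma)))) for p in row]
        for row in img
    ]


def degrade(img, sigma=20.0, seed=None):
    """Blur first (fuzzier strokes), then add noise (dirtier background)."""
    rng = random.Random(seed)
    return add_gaussian_noise(box_blur(img), sigma, rng)


# Tiny 5x5 "glyph": a dark vertical stroke on a white background.
glyph = [[0 if x == 2 else 255 for x in range(5)] for _ in range(5)]
blurred = box_blur(glyph)
noisy = degrade(glyph, sigma=20.0, seed=0)
```

Raising `sigma` makes samples less legible, so a range of values can be used to cover both neat and poor handwriting.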

Pattern recognition

By auto-generating an extensive training data set, our development team was able to apply a Long Short-Term Memory (LSTM) network to read easily legible handwriting with an 85% success rate and poor handwriting with a 60% success rate.
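The case study does not say how the success rate was measured. Two common choices for text recognition are the share of samples transcribed exactly and the character error rate based on edit distance. The sketch below shows both as an illustration only; the function names and the sample predictions are made up for the example and are not project results.

```python
def edit_distance(a, b):
    """Levenshtein distance: minimum inserts, deletes, and substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(
                prev[j] + 1,               # delete ca
                cur[j - 1] + 1,            # insert cb
                prev[j - 1] + (ca != cb),  # substitute, or match at no cost
            ))
        prev = cur
    return prev[-1]


def exact_match_rate(preds, truths):
    """Fraction of samples whose transcription is entirely correct."""
    hits = sum(p == t for p, t in zip(preds, truths))
    return hits / len(truths)


def char_error_rate(preds, truths):
    """Total edit distance divided by total ground-truth characters."""
    errors = sum(edit_distance(p, t) for p, t in zip(preds, truths))
    total = sum(len(t) for t in truths)
    return errors / total


# Made-up sample data for illustration only.
truths = ["anna", "bob@mail.com", "carol", "dave"]
preds = ["anna", "bob@mai1.com", "carol", "dove"]

match_rate = exact_match_rate(preds, truths)
cer = char_error_rate(preds, truths)
```

For email addresses, the exact-match rate is the stricter and more relevant figure, since a single wrong character makes the address unusable.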